When creating machines and services in the cloud we can specify the authentication method for SSH. We can authenticate with a password or by using a public key. It’s very common to access your Linux machines over an SSH connection using the public-key mechanism because it’s more secure and convenient.

You should not share a single key for all your SSH connections. A good practice is to create a new key per ‘context’. For example, a new key for different clients and environments.

Set up a simple passphrase to encrypt your private key. If your private key is stolen or leaked, it is not a direct threat to your resources: the attacker also needs your passphrase. ssh-agent can remember this passphrase, so you don’t need to type it in every time you make a connection.
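As a minimal sketch of the ssh-agent step: the helper name remember_key is hypothetical, and the key path in the usage line is the one generated later in this post. It assumes the OpenSSH tools ssh-agent and ssh-add are installed.

```shell
# Hypothetical helper: make sure an agent is reachable, then load one key into it.
# Argument: <path to private key>
remember_key() {
  # SSH_AUTH_SOCK is set when an agent is already available in this session
  if [ -z "${SSH_AUTH_SOCK:-}" ]; then
    # ssh-agent -s prints shell commands that export the agent's socket and pid
    eval "$(ssh-agent -s)" >/dev/null
  fi
  # ssh-add asks for the passphrase once and keeps the decrypted key in the agent
  ssh-add "$1"
}
```

Usage: remember_key ~/.ssh/client_x_cloud_development. Later connections with that key in the same session should no longer prompt for the passphrase.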

--- 2018 December update ---

A few weeks ago I discovered Chrome browser profiles and gained new insights into using socks proxies. I’m using these profiles daily, which makes my life better. While I was updating this blog a colleague mentioned PAC, which I also decided to include. In the previous version of the blog I explained how to use the proxy with a separate browser and an additional plugin. These extra components are not needed anymore:

Connect through a socks proxy without plugins

Instead of using a separate browser, for example Firefox with a proxy plugin, you can use a browser profile and start it directly with the proxy session. The proxy settings are stored in a separate profile, which you can reuse or vary for different environments. Your default browser profile is untouched, which means you can continue browsing the internet as you are used to.

# MacOS:
"/Applications/Google Chrome.app/Contents/MacOS/Google Chrome" --user-data-dir="$HOME/chrome-proxy-profile" --proxy-server="socks5://localhost:1337"

In this example a new profile is created under chrome-proxy-profile and the socks proxy is reached on local port 1337. This separate profile does not contain anything from your normal profile, so extensions (pop-up blockers, password managers, etc.) and bookmarks are not available.

I would suggest this as a quick ‘n dirty solution, when you don’t feel like configuring stuff.

For other operating systems check the GCP "connecting securely" link.

Configure proxy using PAC

In the previous setup all the connections and websites you open within that browser profile go through the proxy. So if you open www.xebia.com, all this traffic will be executed on the remote machine and forwarded to you. You can whitelist some domains to not use the proxy, but this is not ideal. Some proxy plugins can switch automatically based on the domain: Foxy Proxy for Firefox or Proxy SwitchyOmega for Chrome.

An alternative approach is to configure a system-wide automatic proxy configuration using PAC. This way you can use your normal browser profile (with your favorite add-ons, extensions and bookmarks) and use the socks proxy. Win-Win!

A Proxy Auto-Config (PAC) file is a small JavaScript file which selects a proxy based on the URL (domain). You can use this to automatically enable the socks proxy for certain domains. This approach is most effective when you have multiple machines which are part of a domain, for example *.cloud.myclient.nl. You can save and reference the PAC file locally (~/autoproxy_custom.pac):

function FindProxyForURL(url, host) {
    if (dnsDomainIs(host, "client_x_dev")) {
        return "SOCKS5 localhost:1337";
    } else {
        return "DIRECT";
    }
}

- On your Mac, choose Apple menu > System Preferences, then click Network.

- Select the network service you use in the list — for example, Ethernet or Wi-Fi.

- Click Advanced, then click Proxies.

- Select Automatic Proxy Configuration. Enter the address of the PAC file in the URL field:

file://~/autoproxy_custom.pac

- Optionally you can bypass domains:

*apple.com

Firefox uses the System Settings by default, but you can configure PAC proxy specifically in the Network Configuration settings.

--- end of update ---

Generate SSH key pair

Let’s create a new ssh-key pair with a specific name:

# Your machine, if the .ssh folder does not exist in your home, create it with access right 700
ssh-keygen -f ~/.ssh/client_x_cloud_development
Generating public/private rsa key pair.
Enter passphrase (empty for no passphrase):
Enter same passphrase again:
Your identification has been saved in /Users/abij/.ssh/client_x_cloud_development.
Your public key has been saved in /Users/abij/.ssh/client_x_cloud_development.pub.
The key fingerprint is:
SHA256:LTEV93R8l9ScJyBMwyj5sMVjVK+G/vzJfOR+CJhgRaM

Two files have been generated:

- client_x_cloud_development: the private key

- client_x_cloud_development.pub: the public key, ending in .pub

Never give away your private key. It’s stored with permission 600 for a reason!
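The permissions mentioned above (700 for the .ssh folder, 600 for the private key) can be set by hand if they are ever wrong. A minimal sketch, with hypothetical helper names ensure_ssh_dir and lock_private_key:

```shell
# Hypothetical helper: create an SSH directory that only its owner can access (700).
# Argument: [directory, default ~/.ssh]
ensure_ssh_dir() {
  mkdir -p "${1:-$HOME/.ssh}" && chmod 700 "${1:-$HOME/.ssh}"
}

# Hypothetical helper: restrict a private key file to owner read/write (600).
# Argument: <path to private key>
lock_private_key() {
  chmod 600 "$1"
}
```

Usage: ensure_ssh_dir, followed by lock_private_key ~/.ssh/client_x_cloud_development. Running ls -l on ~/.ssh afterwards should show -rw------- for the private key.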

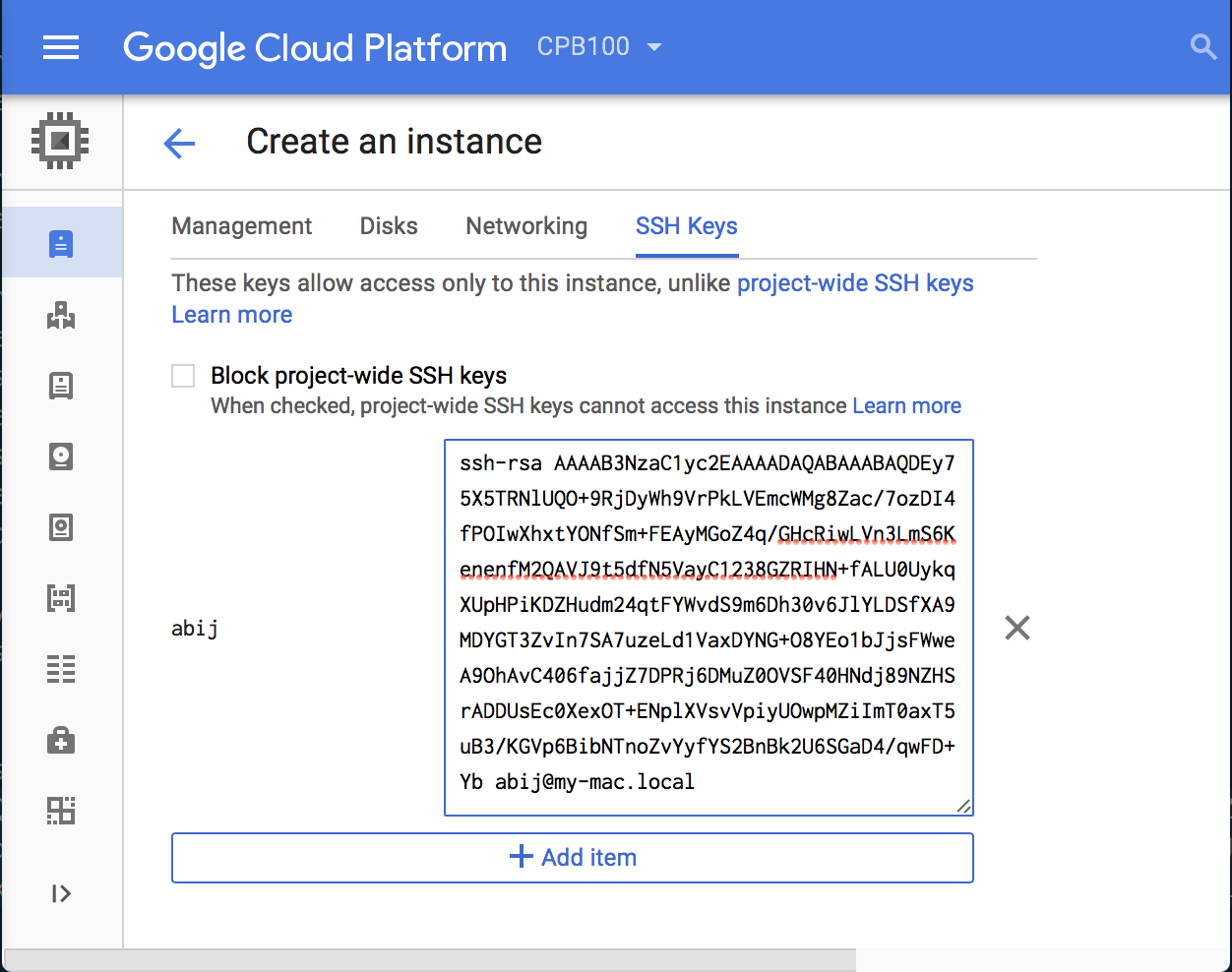

When creating Linux machines, you can provide your public key to authenticate and log in. Here is an example of how to create a VM instance in Google Cloud Platform with the public ssh-key:

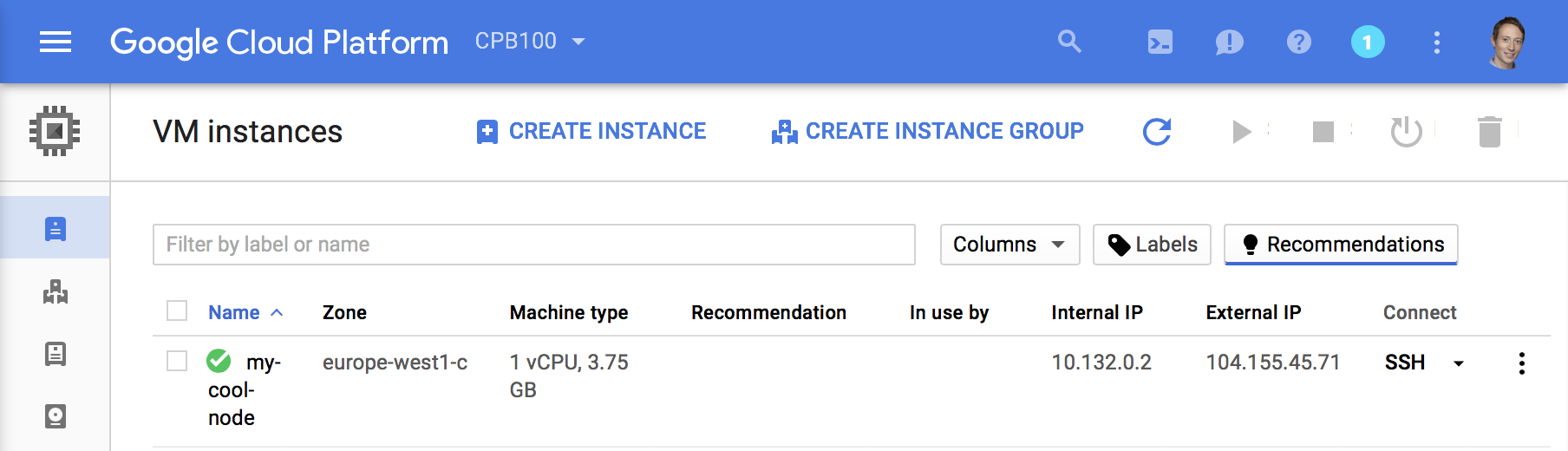

Now spin up the machine and wait a while until the machine becomes available. To connect to the machine we have to look up its public/external IP-address. As you can see below for this VM it is 104.155.45.71 with the hostname my-cool-node.

Let’s connect with SSH to the machine and install a webserver to demonstrate port forwarding.

# on your machine, verbose connection string:
ssh -i ~/.ssh/client_x_cloud_development abij@104.155.45.71

abij@my-cool-node:~#
# on remote-machine, install a webserver
sudo apt-get install -y apache2
# on yum-based distributions the package is called httpd:
# sudo yum install -y httpd

# Now your apache-server listens on port 80 for http traffic.
# You see (-l listen -t tcp -n numbers):
ss -ltn
State    Recv-Q  Send-Q  Local Address:Port  Peer Address:Port
LISTEN   0       128     *:22                *:*
LISTEN   0       128     *:80                *:*
LISTEN   0       128     :::22               :::*

SSH tunnel, single port forward

By default the website is not accessible over public internet (http://104.155.45.71:80): if you have a private cluster you don’t want to expose it publicly. If we want to view the website on the server we can tunnel the port through the SSH-session.

SSH tunnel with -L [MyPort]:[AfterTunnel_address]:[DestPort]

# on your machine, verbose connection string with tunnel

ssh -i ~/.ssh/client_x_cloud_development abij@104.155.45.71 -L8080:localhost:80

Now we can access the website at http://localhost:8080. The request enters the tunnel on local port 8080 and, at the other end of the tunnel, is delivered to localhost port 80 on the remote machine.
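If you only want the tunnel and no remote shell, the -L option can be combined with -N. A minimal sketch, where the helper name open_tunnel and its argument order are hypothetical:

```shell
# Hypothetical helper: open a single-port tunnel without starting a remote shell.
# Arguments: <key file> <user@host> <local port> <destination host> <destination port>
open_tunnel() {
  # -N: do not execute a remote command, only forward ports
  # -L: [local port]:[address as seen from the remote machine]:[destination port]
  ssh -i "$1" -N -L "$3:$4:$5" "$2"
}
```

Usage: open_tunnel ~/.ssh/client_x_cloud_development abij@104.155.45.71 8080 localhost 80. The command keeps running while the tunnel is open; stop it with Ctrl-C.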

To make your life better, we can configure this connection string in the ssh-config file.

# your machine, edit your SSH-config file
vim ~/.ssh/config

host client_x_dev
    HostName 104.155.45.71
    IdentityFile "~/.ssh/client_x_cloud_development"
    User abij
    LocalForward 8080 localhost:80

Now we can connect using: ssh client_x_dev from your machine.

Make your linux-node more secure by disallowing username/password login. You can check the config of the ssh daemon on the remote linux node: /etc/ssh/sshd_config must have ChallengeResponseAuthentication and PasswordAuthentication set to no. This is the default for cloud machines created with public-key login.

This works nicely for a single port on one machine, but a cluster consists of multiple servers with multiple ports, with links to each other. The links will not work with this setup, because we are using localhost / 127.0.0.1 in our browser. For this scenario we can use the socks proxy.

Socks Proxy

A socks proxy will forward all the ports and can forward the DNS-lookups. While the proxy is enabled, all traffic is transported through the SSH-connection and is thereby encrypted. All requests go through the tunnel! You should not browse the internet in the same browser window while the socks proxy is enabled. Use a separate window or, like me, a different browser for the internet.

With the settings below, the browser forwards its DNS-lookups and connects to the proxy on 127.0.0.1, port 1337. Let’s change the ssh config to use DynamicForward instead of LocalForward to enable the socks proxy.

SSH DynamicForward with command-line option -D [localport]

# your machine, ssh config file: ~/.ssh/config
host client_x_dev
    HostName 104.155.45.71
    IdentityFile "~/.ssh/client_x_cloud_development"
    User abij
    DynamicForward 1337

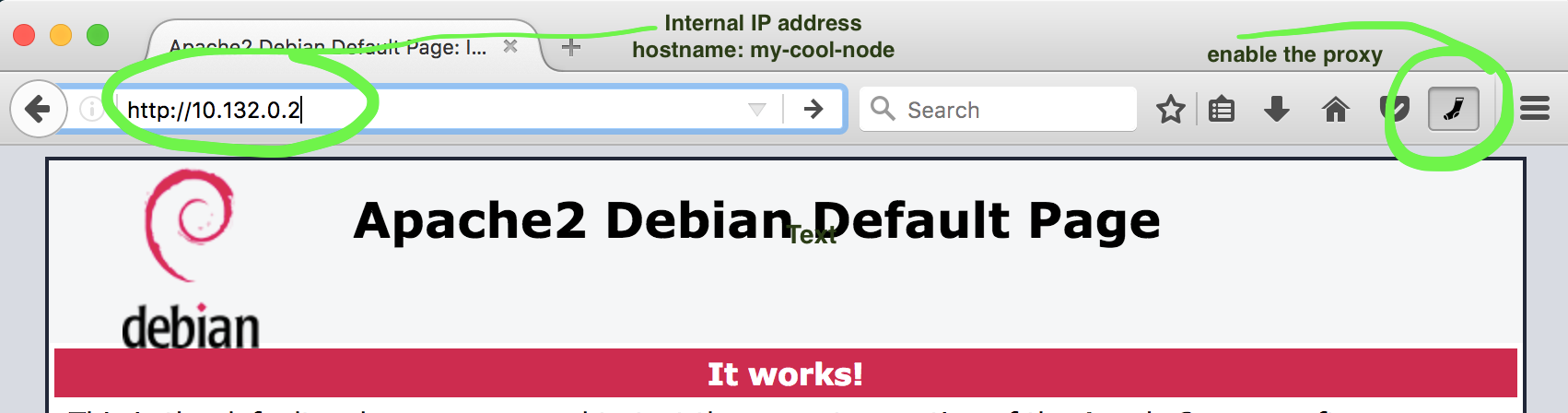

When you connect to your linux node with ssh client_x_dev you are ready to go! You can now access the internal ip-addresses and internal DNS-server names to access your websites. Internal links between servers also work!
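To check the proxy from a terminal before configuring a browser, curl can talk to the socks proxy directly. A minimal sketch, assuming curl is installed and the ssh session with DynamicForward 1337 is open; the helper name via_socks and the host name in the usage line are hypothetical:

```shell
# Hypothetical helper: fetch only the response headers of a URL through the socks proxy.
# Arguments: <url> [proxy address, default localhost:1337]
via_socks() {
  # --socks5-hostname lets the proxy resolve the host name,
  # so internal DNS names are looked up on the remote side
  curl --silent --head --socks5-hostname "${2:-localhost:1337}" "$1"
}
```

Usage: via_socks http://my-internal-node/ should print the HTTP response headers of the internal webserver if the proxy works.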

Connect using Proxy plugin

- Use a separate browser, Firefox for example

- Install a proxy plugin (I like Toggle Proxy)

- Configure the plugin to use SOCKS5 through localhost port 1337 and enable remote DNS resolution if possible.

- Navigating to private IP addresses works through the proxy!

In the 2018 December update I use Chrome with the custom profile to start with the socks proxy enabled by default.

Conclusion

When creating cloud resources or Linux servers in general you should prefer SSH-keys with a passphrase over password login. Simplify your ssh connections with the ~/.ssh/config file.

If you have a single port you want to access you can use port forwarding with LocalForward in your config file. You can reach the port by using localhost in your browser. The limitations are that you cannot use DNS-lookups, links to other nodes don’t work, and you have to add every host/port as a separate entry in the ssh-config file.

With the DynamicForward we can use a socks proxy to enable these features. This works very well with a cluster in the cloud without public internet access. The communication between the browser and the remote-machine is encrypted.